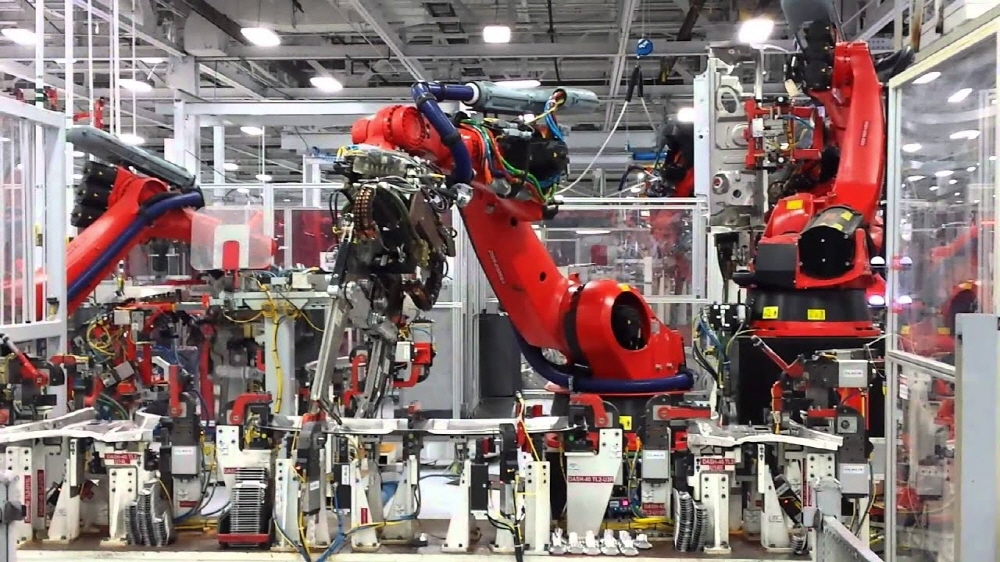

In 2017, Tesla announced its goal of producing 5,000 Model 3s per week. Despite concerns, Elon Musk insisted that robotic assembly lines would speed up production and lower costs. By the fourth quarter of 2018, a year and a half later, Tesla shipped about 91,000 vehicles in the quarter. However, that increase in output was not achieved through Musk’s original idea of an automated assembly line.

As for why automation didn’t work, Elon Musk points to one challenge: robot vision, a branch of computer vision. This is the software that interprets what a robot on an assembly line sees and uses it to determine the robot’s behavior. Unfortunately, the robots on the assembly line at the time couldn’t handle the complexities of car frames, or cases where things like bolts and nuts were pointing in unexpected directions. Whenever such a problem occurred, the assembly line stopped. In the end, it proved much easier to solve these problems by replacing robots with humans in numerous assembly processes.

Computer vision, the broader field that includes robot vision, sits at the boundary between AI technology and innovative applications across a wide range of industries. The progress made in this area is impressive, and the elements needed to realize Elon Musk’s automated assembly line are beginning to emerge. The key is that computers and robots must be able to reliably handle most of the unexpected events that occur in the real world, such as bolts and nuts pointing the wrong way.

Computer vision reached a turning point in 2012, when convolutional neural networks (CNNs) were applied to it. Until then, computer vision solutions largely relied on hand-written algorithms: mathematical descriptions of image features combined with manually defined rules. Humans selected these features and rules, drawing on computer vision research, to specify the objects to be recognized, such as bicycles, stores, and faces.
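To make the hand-crafted approach concrete, here is a minimal sketch in plain Python. It assumes a classic Sobel kernel as the human-designed feature and a hand-picked threshold as the rule; the function names, the threshold, and the toy images are hypothetical and chosen only for illustration.

```python
# Hand-designed vertical-edge filter (a Sobel kernel): a human chose these weights.
SOBEL_X = [
    [-1, 0, 1],
    [-2, 0, 2],
    [-1, 0, 1],
]


def filter2d(image, kernel):
    """Slide the kernel over the image (no padding) and return the response map.

    This is cross-correlation, the operation CNN libraries call "convolution".
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j]
                for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


def has_vertical_edge(image, threshold=3):
    """Hand-written rule: report an edge if any filter response is strong enough."""
    response = filter2d(image, SOBEL_X)
    return any(abs(value) >= threshold for row in response for value in row)


# Toy 4x4 grayscale images (hypothetical data).
edge_image = [[0, 0, 1, 1] for _ in range(4)]  # dark left half, bright right half
flat_image = [[1, 1, 1, 1] for _ in range(4)]  # uniform brightness, no edge

print(has_vertical_edge(edge_image))  # True
print(has_vertical_edge(flat_image))  # False
```

Both the kernel weights and the threshold were picked by a person. Anything the designer did not anticipate, such as a different lighting level or orientation, needs another hand-written rule.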

But with the rise of machine learning and artificial neural networks, all of this has changed. It is now possible to develop algorithms that automatically learn image features from large amounts of training data. This has two practical effects: first, solutions are much more powerful; second, building superior solutions now depends on large volumes of high-quality training data.
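As a rough illustration of learning a feature from examples, the sketch below fits the nine weights of a single 3x3 filter by gradient descent. It is a toy under stated assumptions: the training labels are generated from a known Sobel kernel so the result can be checked, the patches are random values in [-1, 1], and the names `TRUE_KERNEL`, `response`, and `train` are hypothetical. A real CNN learns many such filters across several layers from labelled images.

```python
import random

random.seed(0)

# Hidden "true" filter, used only to label the toy data (an assumption of this demo).
TRUE_KERNEL = [-1, 0, 1, -2, 0, 2, -1, 0, 1]  # flattened 3x3 Sobel kernel


def response(weights, patch):
    """Filter response for one flattened 3x3 patch: a plain dot product."""
    return sum(w * x for w, x in zip(weights, patch))


# Training set: random patches paired with the response the filter should give.
patches = [[random.uniform(-1, 1) for _ in range(9)] for _ in range(200)]
targets = [response(TRUE_KERNEL, p) for p in patches]


def train(patches, targets, steps=300, lr=0.5):
    """Fit the 9 filter weights by gradient descent on half the mean squared error."""
    weights = [0.0] * 9  # start with no knowledge of the filter
    n = len(patches)
    for _ in range(steps):
        grad = [0.0] * 9
        for patch, target in zip(patches, targets):
            error = response(weights, patch) - target
            for k in range(9):
                grad[k] += error * patch[k] / n
        weights = [w - lr * g for w, g in zip(weights, grad)]
    return weights


learned = train(patches, targets)
print([round(w, 3) for w in learned])
```

The learned weights should land very close to the Sobel values even though the training loop was never told what an edge is. The quality of the result depends entirely on the examples it was given, which is why training data matters so much in this approach.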

Nowadays, newer methods such as Generative Adversarial Networks (GANs) can significantly reduce the amount of training data required to develop high-quality computer vision models, along with the time and effort required to collect it. As a result, AI can identify exceptions faster and at a much higher rate, and humans can then evaluate these exceptions and reconsider their solutions.

This new approach is rapidly expanding the applicability, robustness, and reliability of computer vision. It will not only solve the production challenges Elon Musk struggled with, but will also push the boundaries in a number of important applications.

Examples include production automation, face detection, medical imaging, driver assistance and autonomous driving, agriculture, and real estate information. Given these advances, the day will come when Elon Musk’s thinking no longer looks wrong. His vision may simply have arrived a year or two too early. AI, computer vision, and robotics are all approaching a turning point in accuracy, reliability, and efficiency.
