An autonomous vehicle can perceive its surroundings several times better than a human can, thanks to its cameras and sensors. Even so, it sometimes detects a situation too late or fails to detect it at all, and that causes accidents. Even when the vehicle gathers good information about its surroundings, this problem can occur if the AI has not learned how to respond to the situation or is still learning.

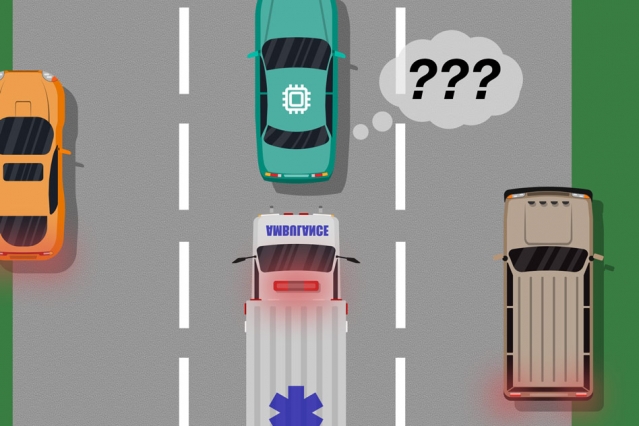

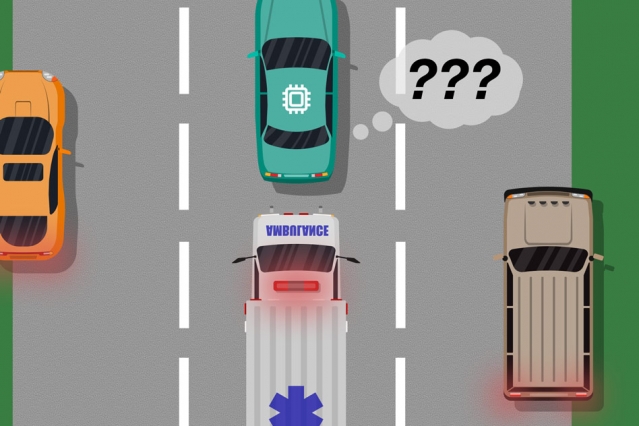

Researchers at Microsoft and MIT have developed a learning model to eliminate this kind of blind spot in autonomous AI. At present, the AI in autonomous driving systems is taught basic driving in virtual simulations. However, this approach cannot cover situations that were not anticipated. For example, when a large white trailer crosses the road, the AI may fail to identify it as a trailer and mistake it for empty space, or it may mishandle an ambulance flashing its red warning lights on the highway, for example in deciding whether to stop on the shoulder.

In a paper presented in 2018 at an international conference on autonomous agents and multi-agent systems, the researchers described a model in which the AI learns how to respond when an unexpected situation arises and uncovers blind spots in autonomous driving.

Applying this model lets the AI learn in real time from the responses a human driver makes on actual roads, and calibrate itself accordingly. In simple terms, if the driver takes over when the AI has drifted out of its lane for some reason, the AI can recognize that something was wrong and learn the response.
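To make the takeover idea concrete, here is a minimal sketch of how such feedback might be recorded. It is not the researchers' implementation: the situation names, the `collect_feedback` helper, and the 1/0 labels are all assumptions made for illustration.

```python
from collections import defaultdict


def collect_feedback(episodes):
    """Group driver-takeover labels by the situation they were observed in.

    Each episode is a (situation, human_took_over) pair. A takeover is
    stored as 1 (the AI's behavior was judged unacceptable) and no
    intervention as 0. This labeling scheme is a hypothetical one.
    """
    feedback = defaultdict(list)
    for situation, human_took_over in episodes:
        feedback[situation].append(1 if human_took_over else 0)
    return dict(feedback)


# Hypothetical driving log: the situation names are invented for this example.
episodes = [
    ("lane_drift", True),
    ("lane_drift", True),
    ("clear_highway", False),
    ("lane_drift", False),
    ("clear_highway", False),
]

feedback = collect_feedback(episodes)
for situation, labels in feedback.items():
    print(situation, labels)
```

The point of the sketch is only that each takeover becomes a data point tied to the situation in which it happened, so repeated interventions in the same situation start to stand out.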

The team explains that the model can keep autonomous vehicles from becoming overconfident and mistakenly believing they are safe under all circumstances. In addition to identifying situations that the AI's algorithms cannot handle, it uses probability calculations to find patterns in the responses and to determine which situations are the safest and which are the riskiest.
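The article does not say how the probabilities are calculated, so the following is only a rough sketch using a simple smoothed frequency estimate, swapped in for illustration. The function names, the 0.5 threshold, and the sample data are all assumptions, not details from the paper.

```python
def blind_spot_probability(labels, prior_unsafe=1.0, prior_safe=1.0):
    """Estimate how likely a situation is to be a blind spot.

    labels holds 1 for each observation where the AI's behavior was
    unacceptable and 0 otherwise. The priors add one pseudo-observation
    of each kind, so a single label never yields a probability of 0 or 1.
    """
    unsafe = sum(labels)
    safe = len(labels) - unsafe
    return (unsafe + prior_unsafe) / (unsafe + safe + prior_unsafe + prior_safe)


def rank_situations(feedback, threshold=0.5):
    """Return (situation, probability, is_risky) tuples, riskiest first."""
    scored = {s: blind_spot_probability(labels) for s, labels in feedback.items()}
    ranked = sorted(scored.items(), key=lambda kv: kv[1], reverse=True)
    return [(s, p, p >= threshold) for s, p in ranked]


# Hypothetical feedback: 1 = unacceptable behavior observed, 0 = acceptable.
sample_feedback = {
    "lane_drift": [1, 1, 0],
    "clear_highway": [0, 0],
}

ranking = rank_situations(sample_feedback)
for situation, probability, is_risky in ranking:
    print(f"{situation}: p={probability:.2f} risky={is_risky}")
```

The design choice worth noting is that a situation is never declared fully safe: even with no negative feedback, the estimate stays above zero, which matches the goal of keeping the AI from being overconfident.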

Even if the autonomous driving system is safe for 90% of the total driving time, that means little if an accident occurs in the remaining 10%. This shows that the AI still needs to learn how to handle such cases. Unfortunately, the paper has only just been published, so the model has so far been tested only in simulation, with limited parameters and relatively simple conditions. The team still needs to test it on real cars.

Nonetheless, autonomous vehicles will become more practical if models like this work well. At present, autonomous vehicles still struggle on roads where snow has piled up and the lane lines are not visible. If this model works well in such difficult conditions, autonomous vehicles that drive as well as a professional driver are likely to become a reality.
