To protect themselves from predators, herbivores stand up soon after birth and begin walking within a few hours. Google is developing an AI robot that learns to get up and walk on its own, without human help. Autonomous machines, with self-driving vehicles as the best-known example, are approaching practical use, but the AI they run relies on what it learned in advance. Algorithms that learn behavior patterns on their own, without anyone’s help, are not yet common. Through a new project, a Google research team is working on a robot with this self-learning ability.

Starting with no prior knowledge, the quadruped robot reportedly learns to walk forward and backward and to turn left and right within two to three hours. The Robotics at Google team found a way to make this learning possible a year ago by tuning existing algorithms.

A common approach in robot reinforcement learning is to model a virtual environment on a computer, train a virtual robot there to some extent, and then transfer the result to the real robot. This reduces the risk of physically damaging a prototype-stage robot. But because the environment is only virtual, it is hard to reproduce slopes, uneven ground, sand, and similar conditions as they occur in nature, so training the AI ends up taking a lot of effort and time.

The research team instead trained the robot’s algorithm in a real environment from the start. The algorithm was improved so that it learns efficiently with fewer trials and failures. As a result, the robot was able to stand up and walk around on its own within two hours, and it adapted faster to varied environments such as physical steps and uneven slopes. On complex terrain, however, unlike simply getting up and walking, a human reportedly had to step in and help several times.

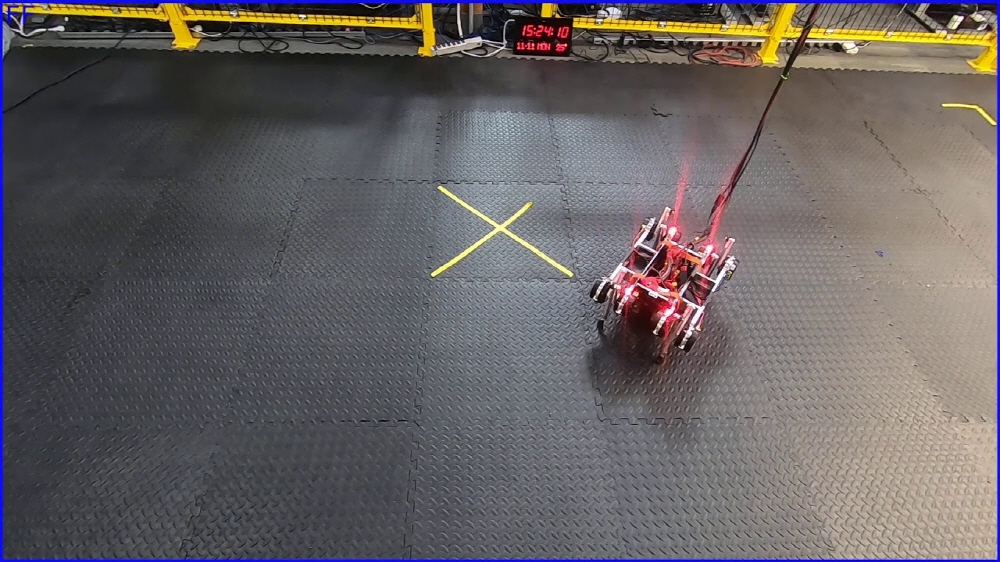

So the research team built a miniature training area enclosed by walls, so that the robot could learn to walk on several types of terrain at once. When the robot is blocked by a wall, it changes direction and starts walking again, which improves learning efficiency.

In addition, because the robot may fail the first time it tries to move on unfamiliar terrain, a hard-coded routine was added so that it stands back up when it tips over. With these adjustments, the robot eventually learned to walk autonomously on a variety of surfaces, from flat ground to a mattress and a mat with many gaps, without human involvement. This is an important milestone toward making robots more useful in the future. However, the robot currently relies on a motion capture system with markers to know the terrain, an approach that cannot be applied in the real world.
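The idea of training without human resets can be illustrated with a toy sketch. This is not the research team's algorithm: it assumes a one-dimensional walled arena, a made-up fall probability per stride length, and a simple epsilon-greedy learner in place of the team's reinforcement learning method. All names and numbers (`ARENA_SIZE`, `STRIDES`, `FALL_PROB`, `train`) are hypothetical. It only shows how a wall rule and a scripted stand-up step let the learning loop run without interruption.

```python
import random

ARENA_SIZE = 5.0                  # hypothetical arena width in metres
STRIDES = [0.1, 0.3, 0.6]         # hypothetical candidate stride lengths
FALL_PROB = {0.1: 0.01, 0.3: 0.05, 0.6: 0.6}  # made-up fall risk per stride


def train(steps=5000, epsilon=0.1, seed=0):
    """Run an uninterrupted toy training loop and return summary statistics."""
    rng = random.Random(seed)
    value = {s: 0.0 for s in STRIDES}   # running mean reward per stride
    count = {s: 0 for s in STRIDES}
    x, direction, fallen = ARENA_SIZE / 2, 1, False
    recoveries = turns = 0

    for _ in range(steps):
        if fallen:
            # Scripted stand-up: no learning and no human reset on this step.
            fallen = False
            recoveries += 1
            continue

        # Epsilon-greedy choice: mostly exploit, sometimes explore.
        if rng.random() < epsilon:
            stride = rng.choice(STRIDES)
        else:
            stride = max(STRIDES, key=value.get)

        if rng.random() < FALL_PROB[stride]:
            fallen, reward = True, 0.0
        else:
            x += direction * stride
            reward = stride             # reward is the distance attempted
            # Wall rule: clamp at the wall and turn around.
            if x <= 0.0 or x >= ARENA_SIZE:
                x = min(max(x, 0.0), ARENA_SIZE)
                direction = -direction
                turns += 1

        # Incremental update of the mean reward for the chosen stride.
        count[stride] += 1
        value[stride] += (reward - value[stride]) / count[stride]

    return {"value": value, "recoveries": recoveries, "turns": turns, "x": x}


if __name__ == "__main__":
    print(train())
```

In this sketch every fall is handled by the scripted recovery branch and every wall contact by the turn-around rule, so the loop never waits for a person to reposition the robot. A real system would replace the bandit learner with a full reinforcement learning algorithm and the recovery branch with an actual stand-up controller.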

Despite the current reliance on motion capture, the research team intends to apply the new algorithms from this work to various robots, building up know-how for robots that will operate in many fields. Most of the environments we know were built by humans, and humans walk on legs. The team’s rationale for the research is therefore that robots must walk on legs to get around in the human world. Related information can be found here.
